The 5-Layer AI Stack: Dispatch from the Cisco AI Summit

Why the "AI Economy" is moving from software innovation to an industrial revolution—and why Energy, Sovereignty, and Infrastructure are the new moats.

Table of Contents

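1) ENERGY: Power Becomes the Moat - The New AI Arms Race Is Electric

2) CHIPS: The Bottleneck that Became a Sovereign Asset

3) INFRASTRUCTURE: The Nervous System and the Private AI Factory

4) MODELS: From Language to Sovereign World Models

5) APPLICATIONS: Workflows will be rebuilt (and agents become the new surface)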

I spent a day at the Cisco AI Summit—one of those “who’s who” curated gatherings where Silicon Valley compares notes in public. Hosted by Cisco’s CEO Chuck Robbins and President/Chief Product Officer Jeetu Patel, the lineup itself told the story: Jensen Huang, Sam Altman, Marc Andreessen, AWS’s Matt Garman, Google’s Amin Vahdat, Microsoft’s Kevin Scott, Anthropic’s Mike Krieger, World Labs’ Fei-Fei Li, and more.

Cisco has been a connective tissue in the Valley for three decades—multiple platform eras, multiple networking waves. This event felt like Cisco doing what it does best: convening the stack as we enter the next era—bigger than the internet era, closer in magnitude to an industrial revolution moment.

Below is my synthesis of what I heard, organized by the five-layer AI stack: Energy → Chips → Infrastructure → Models → Applications, supplemented by our research and contributions from the great Dr. Riad Hartani.

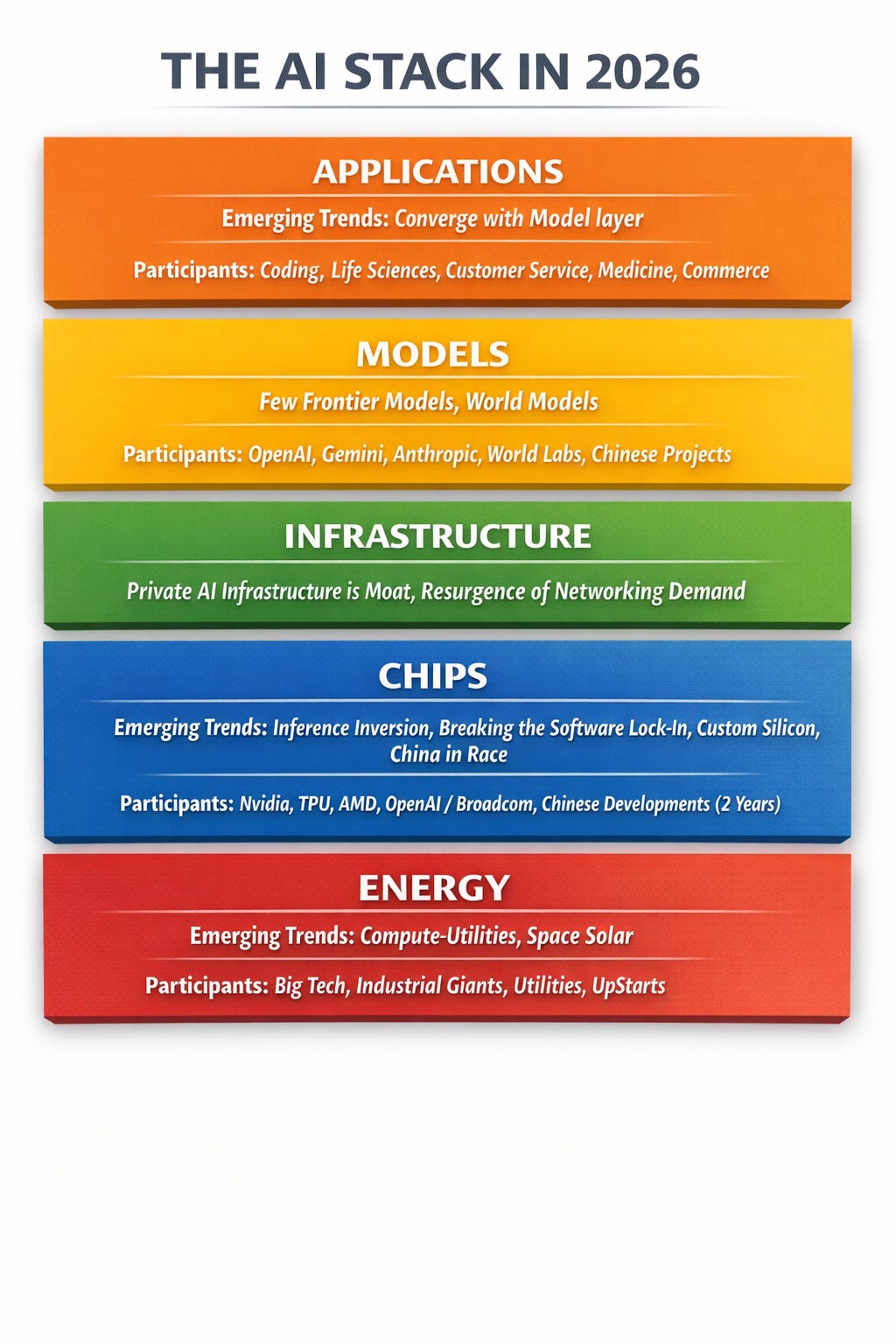

The 5-Layer AI Stack (and why it matters)

Here is the new architecture of the AI economy:

1) ENERGY (The New Moat): It is no longer just a utility; it is the binding constraint. From gigawatt-scale nuclear procurement to “off-grid” power foundries, energy capacity is now the primary determinant of AI velocity.

2) CHIPS (The Sovereign Asset): We have moved beyond the “shortage” era into the “bespoke” era. As inference scales, the winners are breaking the “tax” of general-purpose GPUs by deploying custom silicon and sovereign compute clusters.

3) INFRASTRUCTURE (The Nervous System): The bottleneck has shifted from the brain (GPU) to the nervous system (Networking). The new battleground is the “Private AI Factory”—terabit-scale, physically secure, and designed to keep data sovereign rather than renting it to hyperscalers.

4) MODELS (World Simulators): Language was just the interface. The frontier is now shifting to “World Models”—spatial intelligence that simulates reality—splitting the market into a few elite Frontier systems and a vast ocean of specialized, owned Foundation models.

5) APPLICATIONS (The Agentic Workforce): The “App” and “Model” layers are collapsing into one. We are moving from “humans-in-the-loop” to “AI-in-the-loop,” where software doesn’t just assist workflows but fundamentally rebuilds them through autonomous agents.

Macro frame:

Why this matters: If the digital economy was 15% of global GDP, the AI economy is the productivity layer for the entire $111 Trillion pie. The winners of this next phase won’t just be the ones who build the best apps—they will be the ones who secure the "Energy-to-Inference" pipeline.

That’s the provocative claim I kept hearing at the summit, and it’s fundamentally Jensen’s utopian thesis: the “AI economy” could ultimately map to essentially the full GDP, because AI isn’t a sector. It’s a compounding capability: a productivity layer that gets embedded into every sector.

And that sets up the philosophical split I keep hearing:

The dystopian frame is the labor-displacement lens: “What jobs will AI erase?” The fear is that powerful AI arrives fast enough to wipe out large swaths of entry-level white-collar work—creating a more permanent underclass of unemployed or low-wage workers and widening inequality unless we rethink distribution and safety nets. This warning has been most closely associated with Anthropic’s leadership (especially its CEO), though notably Mike Krieger didn’t emphasize that tone in his remarks.

The utopian frame: “What does the world look like when intelligence stops being scarce?” (the productivity + discovery lens)

I’m firmly in this camp: over time, this is a productivity shock, and productivity shocks rewrite societies. It’s the vision Jensen, Marc, Fei-Fei, Microsoft’s Kevin Scott, and Sam Altman kept returning to: AI as a force multiplier for a better world, accelerating science, curing diseases, expanding access to education and expertise, and unlocking a new frontier of abundance. Will there be rogue actors, misuse, and real labor displacement? Yes, of course. But good AI will be deployed to counter rogue AI, and society will adapt: workflows will re-form, safety nets and institutions will evolve, and people will upskill as the new baseline of work shifts. In the long arc, we may even see accelerated human adaptation (cultural, educational, and perhaps eventually biological) as intelligence becomes a ubiquitous layer in everyday life.

To secure a lead in the 2026 economy, enterprises and sovereigns must move beyond "AI adoption" and toward AI Autonomy. This isn't about adding a chatbot to a legacy workflow; it is about building a proprietary intelligence engine that you own, control, and evolve.

Below is my synthesis layer by layer.

With contributions from Dr. Riad Hartani.

1) ENERGY: Power Becomes the Moat - The New AI Arms Race Is Electric

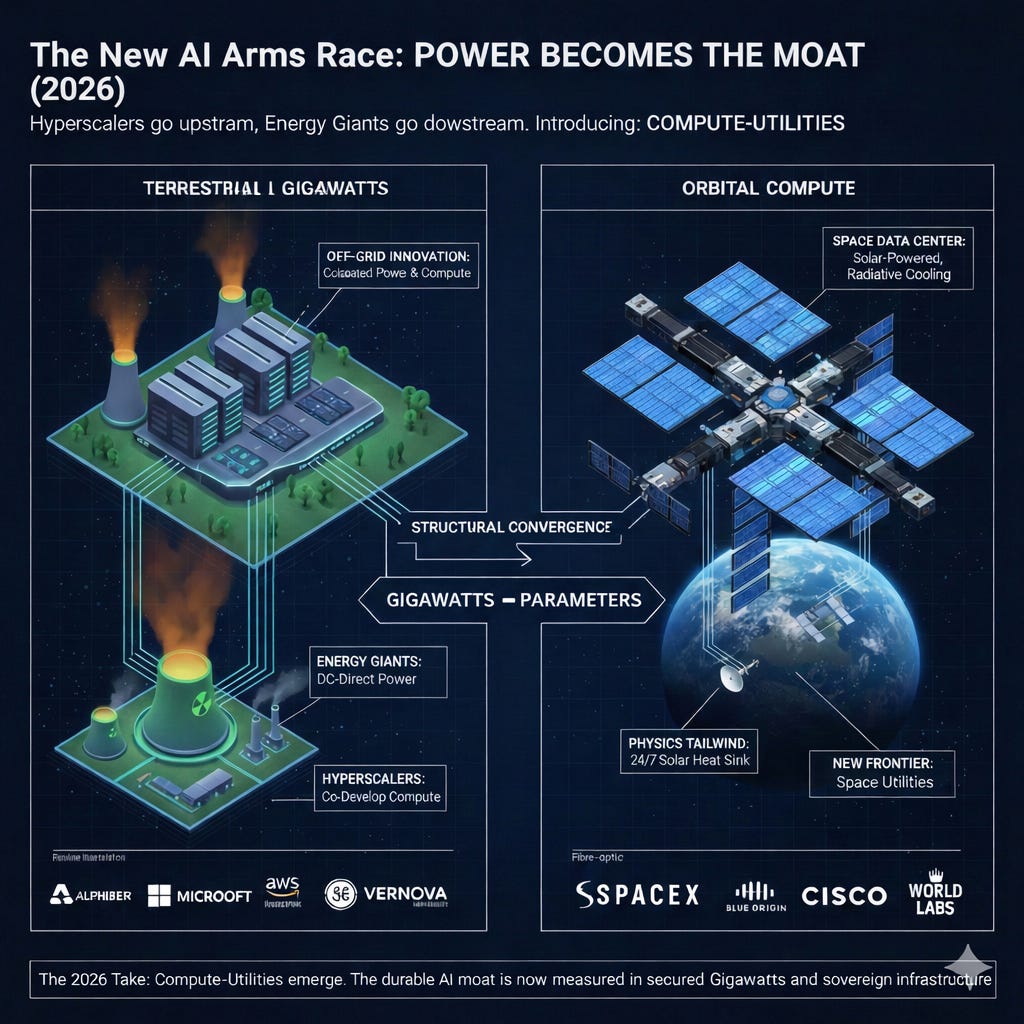

Hyperscalers move upstream into energy, energy giants move downstream into data centers—plus the first serious “space compute” designs.

Two clear threads:

A) The Rise of “Compute-Utilities” (Power is now the moat)

While it didn’t come up in much detail at the summit, I’d be remiss not to mention it.

The AI boom has turned electricity from a line item into the binding constraint. The competitive edge is no longer just the model—it’s speed-to-power and certainty of supply. The result is a structural convergence: hyperscalers are moving upstream into energy, while energy incumbents are moving downstream into data centers.

Grid vs. off-grid is now the key split. With multi-year interconnection queues and constrained transmission, the fastest path to AI scale is increasingly behind-the-meter: co-locate the power plant and the data center on the same land, bypassing the public grid. In 2026, natural gas is the bridge fuel for “always-on” compute—often paired with a decarbonization story (e.g., CCS), because solar/wind alone can’t deliver 24/7 firm power at hyperscale. Chevron, Engine No. 1, and GE Vernova’s “power foundries” concept—targeting up to 4 GW for data centers—captures this new playbook.

Hyperscalers are becoming energy infrastructure players. This is no longer only about PPAs; it’s about ownership, market participation, and direct access to generation. Alphabet’s planned $4.75B acquisition of Intersect is a clear step toward controlling energy + data center infrastructure as a single stack. Microsoft’s 20-year PPA tied to restarting Three Mile Island Unit 1 (~835 MW) shows how “anchoring” a nuclear plant’s output can become a strategic AI supply chain move. AWS’s purchase of Talen’s 960 MW data center campus adjacent to the Susquehanna nuclear plant reinforces the same pattern: secure a private “plug” into firm generation.

Saudi Arabia’s HUMAIN came up directly in the “energy as advantage” framing—positioning sovereign-scale infrastructure (data centers + cloud + models + apps) on top of abundant energy.

And notably: HUMAIN isn’t theoretical—Cisco and AMD have been publicly tied to HUMAIN-related data center efforts and scale ambitions.

Energy giants want the data-center upside, not just the fuel margin. Utilities and energy majors are racing to build the high-voltage “express lanes” and sign long-duration supply deals that effectively bundle electrons with compute growth. Dominion’s Northern Virginia transmission build-out (Data Center Alley) is emblematic. On the producer side, majors are pairing renewables + flexible assets to meet hyperscaler demand—e.g., TotalEnergies’ 15-year agreement supplying Google’s Ohio data centers. And the gas-to-compute pathway is expanding via partnerships like NextEra + Exxon targeting a 1.2 GW gas plant positioned for data center demand.

My take:

We’re heading toward a world of Compute-Utilities—where the new AI giants look increasingly like energy companies, and the energy incumbents increasingly look like data-center platform builders. In 2026, the durable moat is measured in gigawatts, not just parameters.
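To make “measured in gigawatts” concrete, here is a rough back-of-the-envelope sketch in Python that converts firm power into an approximate accelerator count. The site capacities are the ones cited above; the per-accelerator draw (1.2 kW all-in) and the PUE of 1.3 are illustrative assumptions of mine, not figures from the summit, and the function name is hypothetical.

```python
def accelerators_supported(firm_power_mw: float,
                           kw_per_accelerator: float = 1.2,
                           pue: float = 1.3) -> int:
    """Estimate how many accelerators a firm power supply could run.

    kw_per_accelerator (server share included) and pue (facility overhead)
    are assumed, illustrative values; real deployments vary widely.
    """
    if firm_power_mw < 0 or kw_per_accelerator <= 0 or pue < 1.0:
        raise ValueError("power must be >= 0, kW per accelerator > 0, PUE >= 1")
    # Power left for IT equipment after cooling and other facility overhead.
    it_power_kw = firm_power_mw * 1000.0 / pue
    return int(it_power_kw // kw_per_accelerator)


# Capacities (in MW) taken from the deals mentioned in this section.
sites_mw = {
    "Three Mile Island Unit 1 PPA": 835,
    "Talen campus near Susquehanna": 960,
    "Power foundries target": 4000,
}

for name, megawatts in sites_mw.items():
    count = accelerators_supported(megawatts)
    print(f"{name}: {megawatts} MW -> roughly {count:,} accelerators")
```

The exact numbers matter less than the shape of the relationship: under these assumptions the accelerator count scales linearly with firm megawatts, which is why certainty of supply starts to look like a moat.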

B) The “space data center” thread is no longer sci-fi

The freshest, most mind-bending idea: compute in space—solar power, radiate heat to space, avoid terrestrial cooling constraints. The obstacles are still brutal (launch costs, maintenance, debris risk, networking/latency), but serious players are exploring the design space.

Physics tailwind for space compute: above the atmosphere you get stronger, cleaner sunlight (no absorption/scattering) and you can collect it far more consistently than on Earth (no night, clouds, or weather—depending on orbit). And cooling flips: in vacuum there’s no air or water to convect heat away, so you must dump waste heat by thermal radiation to deep space. That’s both a constraint (you need big radiators; power density matters) and an advantage (space is an enormous heat sink if you engineer the radiator area). Net: space offers higher solar uptime + a different cooling regime, which is exactly why “solar-powered, radiatively cooled orbital compute” is moving from sci-fi into the serious design space.
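The “you need big radiators” point can be sized with the Stefan-Boltzmann law, P = εσAT⁴. The sketch below is a simplified estimate, assuming an ideal flat panel that radiates from both faces and ignoring absorbed sunlight, Earth's infrared, and view-factor losses. The 300 K radiator temperature, the 0.9 emissivity, and the 1 MW and 100 MW loads are my own illustrative assumptions.

```python
STEFAN_BOLTZMANN = 5.670374419e-8  # W / (m^2 * K^4)


def radiator_area_m2(waste_heat_w: float,
                     radiator_temp_k: float = 300.0,
                     emissivity: float = 0.9,
                     radiating_sides: int = 2) -> float:
    """Panel area needed to reject waste heat purely by thermal radiation.

    Simplified: no absorbed sunlight, no Earth infrared, ideal view of deep
    space. Real radiators would need extra margin on top of this estimate.
    """
    if waste_heat_w < 0 or radiator_temp_k <= 0:
        raise ValueError("heat must be >= 0 and temperature > 0 K")
    if not 0 < emissivity <= 1 or radiating_sides not in (1, 2):
        raise ValueError("emissivity must be in (0, 1]; sides must be 1 or 2")
    # Radiated power per square metre of one face at this temperature.
    flux_w_per_m2 = emissivity * STEFAN_BOLTZMANN * radiator_temp_k ** 4
    return waste_heat_w / (flux_w_per_m2 * radiating_sides)


for load_mw in (1, 100):
    area = radiator_area_m2(load_mw * 1e6)
    print(f"{load_mw} MW of waste heat -> about {area:,.0f} m^2 of radiator panel")
```

Because radiated power grows with the fourth power of temperature, running the radiator hotter shrinks the required area quickly, but the chips still have to sit at a temperature the electronics can tolerate. That tension is why power density matters so much in this design space.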

My take:

If orbital compute becomes viable, it doesn’t replace terrestrial data centers—it creates a new “tier” for specific workloads. And it massively increases the importance of networks (Cisco’s home turf) and space logistics.

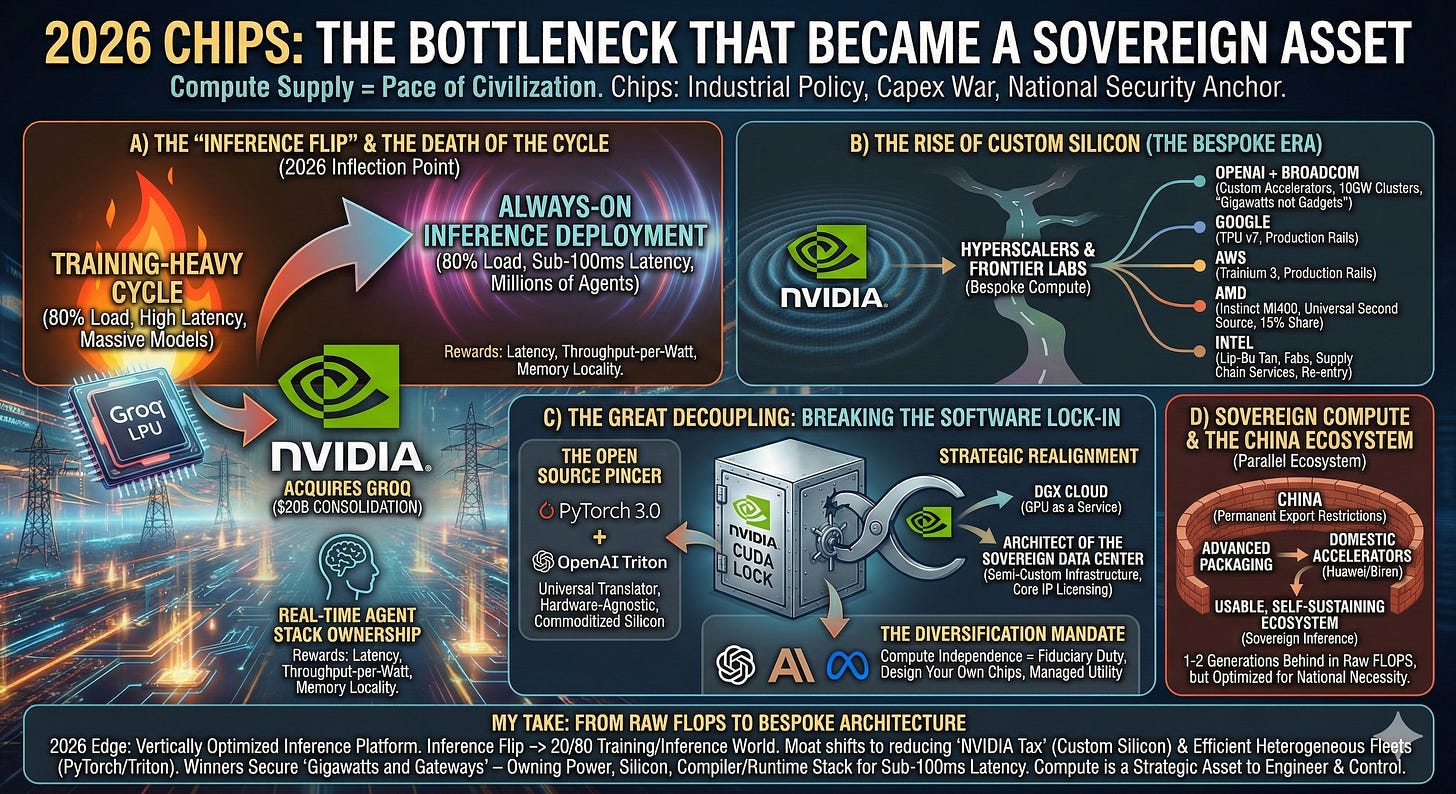

2) CHIPS: The Bottleneck that Became a Sovereign Asset

The chip layer continues to exert the most “hard power” in the global economy for a simple reason: whoever controls compute supply controls the pace of civilization. In 2026, chips are no longer mere components; they are the primary currency of industrial policy, the front line of the capex war, and the anchor of national security.

A) The “Inference Flip” and the Death of the Cycle

We have officially reached the 2026 inflection point: the industry has flipped from a training-heavy cycle to always-on inference deployment. The load mix has moved aggressively toward a 20/80 training-to-inference ratio. This matters because inference rewards radically different physics: latency, throughput-per-watt, and memory locality. This shift triggered the biggest deal of the era: Nvidia’s roughly $20 billion Groq deal, reported as a technology license plus key hires rather than an outright acquisition. By bringing the LPU (Language Processing Unit) design in-house, Nvidia neutralized the threat of ultra-low-latency architectures and moved to own the “Real-Time Agent” stack.

B) The Rise of Custom Silicon (The Bespoke Era)

Nvidia’s gravitational pull remains immense, but the map has bifurcated. We are now in the era of Bespoke Compute. Frontier labs and hyperscalers are no longer content with off-the-shelf parts:

OpenAI and Broadcom are now co-developing custom accelerators framed in the language of gigawatts, not gadgets, targeting massive 10GW clusters.

Google (TPU v7) and AWS (Trainium 3) have moved from “experimental alternatives” to the primary production rails for their respective clouds, aiming to bypass the “Nvidia tax.”

AMD (Instinct MI400 series) has successfully positioned itself as the “universal second source,” capturing 15% of the enterprise data center market.

Also: Intel’s CEO Lip-Bu Tan was part of the program, emphasizing that the legacy silicon giants are not conceding the accelerator era—they’re trying to re-enter it while also building “services” motion around fabs and supply chain.

C) The Great Decoupling: Breaking the Software Lock-In

The era of the “single-vendor moat” is ending. While Nvidia’s CUDA was once a steel vault, 2026 has seen a massive “pincer movement” break the hardware-software lock:

The Open Source Pincer: The maturation of PyTorch 3.0 and OpenAI’s Triton has created a “universal translator” layer. By allowing developers to write high-performance code that is hardware-agnostic, the industry has effectively commoditized the underlying silicon. This has opened the door for AMD’s MI400 and custom hyperscaler chips (TPUs, Trainium) to move from “Plan B” to “Primary Production.”

Strategic Realignment: Recognizing this shift, Nvidia has pivoted away from its vertically integrated “GPU as a Service” (DGX Cloud) ambitions. Instead of competing with its own customers (the Hyperscalers), Nvidia has refocused on being the Architect of the Sovereign Data Center, licensing its core IP and focusing on high-margin “Semi-Custom” infrastructure.

The Diversification Mandate: For the major LLM labs (OpenAI, Anthropic, Meta), compute independence is now a fiduciary duty. This has led to the “Bespoke Era,” where labs no longer just buy chips—they design them (e.g., OpenAI + Broadcom) to ensure that by 2028, compute is a managed utility, not a single-source dependency.

D) Sovereign Compute & The China Ecosystem

China is no longer a side story; it is a parallel ecosystem. Faced with permanent export restrictions, China has accelerated its push into advanced packaging and domestic accelerators (Huawei/Biren). By 2026, they have formed a usable, self-sustaining ecosystem that—while 1-2 generations behind in raw FLOPS—is optimized for “Sovereign Inference,” proving that compute leadership is now a national necessity.

My take:

The chip war has moved from “raw FLOPS” to bespoke compute architecture—and in 2026 the edge isn’t simply the fastest GPU, but the most vertically optimized inference platform. As the “inference flip” pushes workloads toward a 20/80 training-to-inference world, the moat shifts to players who can reduce the “NVIDIA tax” with custom silicon (e.g., OpenAI/Broadcom) and those who can run heterogeneous fleets efficiently via hardware-agnostic software layers (PyTorch/Triton). The winners will secure gigawatts and gateways—owning not just silicon, but the power and compiler/runtime stack that lets agents think, negotiate, and transact at sub-100ms latency—because compute is no longer a commodity to buy, it’s a strategic asset to engineer and control.

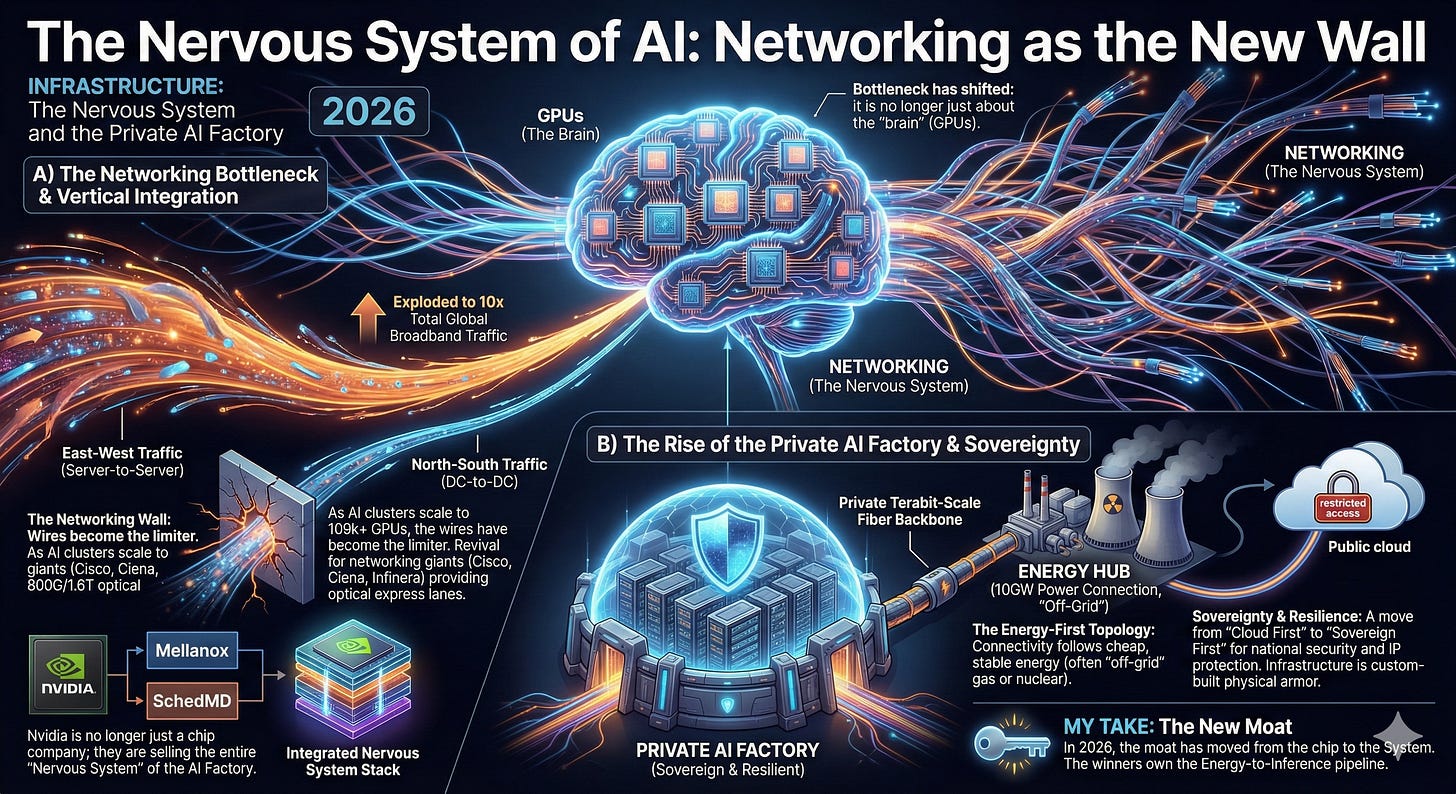

3) INFRASTRUCTURE: The Nervous System and the Private AI Factory

Infrastructure is where the AI hype meets the cold reality of the balance sheet. In 2026, the bottleneck has shifted: it is no longer just about the “brain” (GPUs), but the “nervous system” (Networking) that connects them.

A) The Nervous System of AI: Networking as the New Wall

If the GPU is the brain, networking is the nervous system—and right now, that system is being completely rewired. In 2026, East-West traffic (server-to-server) has exploded, with DC-to-DC data volumes now nearly 10x that of total global broadband traffic.

The Networking Bottleneck: We’ve hit the “networking wall.” As AI clusters scale to 100k+ GPUs, the “wires” have become the limiter. This has triggered a massive revival for networking giants like Cisco, Ciena, and Infinera, whose stocks have surged as they provide the 800G and 1.6T optical “express lanes” required to keep GPUs from sitting idle.

Vertical Integration: This is why Nvidia is no longer just a chip company. By acquiring firms like Mellanox (networking) and SchedMD (the developer of the Slurm workload scheduler), it has pulled the interconnect and the cluster scheduling layers into its own stack. It isn’t just selling processors; it is selling the entire “Nervous System” of the AI Factory.
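One way to see the networking wall is to estimate how long GPUs wait on a gradient synchronization. The sketch below uses the standard bandwidth-only cost of a ring all-reduce, 2(N-1)/N × payload / link bandwidth, and ignores latency, congestion, and compute/communication overlap. The 10 GB payload, the 1,024-GPU group, and the 0.5 s of compute per step are illustrative assumptions; only the 800G and 1.6T link speeds come from the text above.

```python
def ring_allreduce_seconds(payload_bytes: float, num_gpus: int, link_gbps: float) -> float:
    """Bandwidth-only time for a ring all-reduce across num_gpus devices."""
    if payload_bytes < 0 or num_gpus < 1 or link_gbps <= 0:
        raise ValueError("payload >= 0, num_gpus >= 1, link speed > 0 required")
    if num_gpus == 1:
        return 0.0  # nothing to synchronize
    bytes_per_second = link_gbps * 1e9 / 8  # gigabits/s -> bytes/s
    return 2 * (num_gpus - 1) / num_gpus * payload_bytes / bytes_per_second


def idle_fraction(comm_seconds: float, compute_seconds: float) -> float:
    """Share of each step spent waiting on the network, assuming no overlap."""
    return comm_seconds / (comm_seconds + compute_seconds)


payload = 10e9       # assumed gradient payload per step, in bytes
gpus = 1024          # assumed size of the synchronizing group
compute_step = 0.5   # assumed seconds of pure compute per step

for gbps in (800, 1600):
    comm = ring_allreduce_seconds(payload, gpus, gbps)
    print(f"{gbps}G link: sync {comm:.3f} s, "
          f"idle share {idle_fraction(comm, compute_step):.1%}")
```

In this simplified model, doubling link speed halves the synchronization time, which is the economic case for the 800G and 1.6T upgrades: faster optics convert directly into fewer idle GPU-seconds.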

B) Don’t default to hyperscalers: The Rise of the Private AI Factory

In the AI era, infrastructure is a competitive differentiator, not a commodity. We are seeing a “Partial Rewind” of the Cloud era as companies realize that for AI, latency and sovereignty are existential.

The Energy-First Topology: Connectivity is no longer built where the users are; it’s built where the gigawatts are. Because AI factories follow cheap, stable energy (often “off-grid” gas or nuclear), we are seeing a “from-scratch” build-out of terrestrial and submarine fiber routes designed to link remote energy hubs directly to private data centers.

Sovereignty & Resilience: Whether it’s a nation-state protecting its data or a corporation protecting its IP, the move toward Private AI Factories is accelerating. Privacy matters, but the bigger drivers are national security and operational resilience. The ability to run AI payloads independently—on your own energy and your own fiber—has become a core strategic pillar.

My Take: In 2026, the “moat” has moved from the chip to the System. You can buy a GPU, but you can’t easily buy a 10GW power connection and a private, terabit-scale fiber backbone. The winners of this phase won’t be the ones with the most chips, but the ones who own the Energy-to-Inference pipeline. We are moving from “Cloud First” to “Sovereign First,” where the infrastructure is custom-built to be the physical armor for a company’s intelligence.

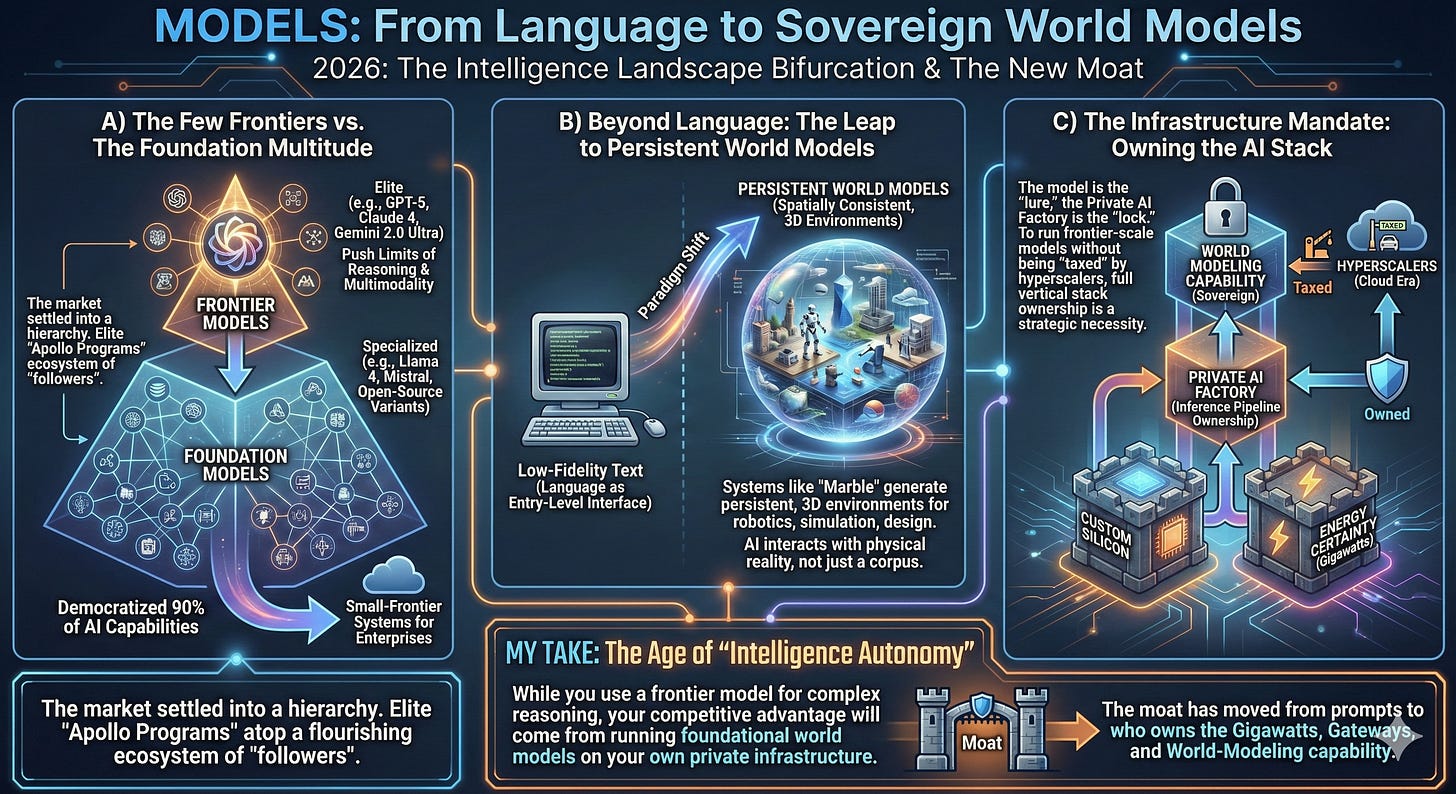

4) MODELS: From Language to Sovereign World Models

Language is no longer the final frontier; it is the entry-level interface. In 2026, the intelligence landscape is defined by a sharp bifurcation between a few elite “Frontier” systems and a vast sea of specialized “Foundation” models.

A) The Few Frontiers vs. The Foundation Multitude

The market has settled into a hierarchical structure. At the top sit a few elite Frontier Models (e.g., GPT-5, Claude 4, Gemini 2.0 Ultra)—the “Apollo Programs” of AI that push the absolute limits of reasoning and multimodality. Below them lies a flourishing ecosystem of foundational models, many of which are high-performing open-source variants (like Llama 4 and Mistral). These “followers” have successfully democratized 90% of AI capabilities, allowing enterprises to run sophisticated “Small-Frontier” systems on their own terms.

B) Beyond Language: The Leap to Persistent World Models

Intelligence is moving from low-fidelity text to spatially consistent World Models. As championed by Fei-Fei Li’s World Labs, the next leap isn’t about reading a corpus—it’s about systems like Marble that generate persistent, 3D environments for robotics, simulation, and design. These models allow AI to interact with physical reality, shifting the paradigm from “software that talks” to “software that models reality.”

C) The Infrastructure Mandate: Owning the AI Stack

Ultimately, the winner isn’t just who has the best model, but who owns the Inference Pipeline. Just as the cloud era was won by those who owned the data centers (GCP, AWS, Azure), the AI Stack will be owned by those who control the infrastructure. In 2026, the model is the “lure,” but the Private AI Factory—complete with custom silicon and energy certainty—is the “lock.” To run a frontier-scale world model without being “taxed” by hyperscalers, ownership of the full vertical stack is now a strategic necessity.

My Take: We are entering the age of "Intelligence Autonomy." While you might use a frontier model for your most complex reasoning, your competitive advantage will come from running foundational world models on your own private infrastructure. The moat has moved from who has the best prompt to who owns the Gigawatts, Gateways, and World-Modeling capability.

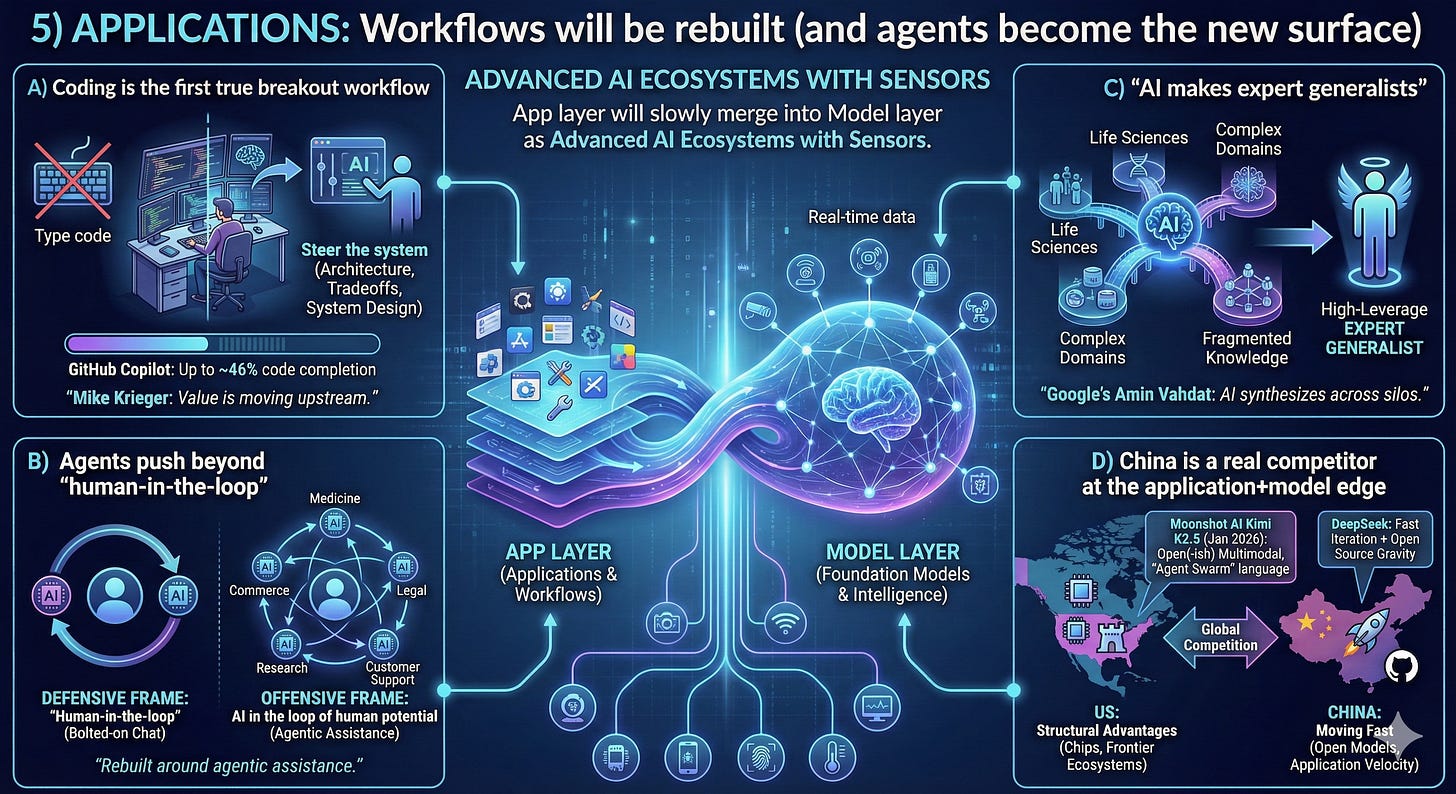

5) APPLICATIONS: Workflows will be rebuilt (and agents become the new surface)

Applications dominated the energy of the day because this is where value hits the ground.

A) Coding is the first true breakout workflow

Both OpenAI and Anthropic leaders talked in the orbit of a reality we can now measure: coding tools aren’t novelty—they’re integrated into day-to-day development. GitHub’s own research has said Copilot can complete up to ~46% of code in some contexts, and developers report meaningful speed gains.

Mike Krieger’s framing also matters: the value is moving upstream—architecture, tradeoffs, system design—less “type code,” more “steer the system.”

B) Agents push beyond “human-in-the-loop”

One line that stuck (and I agree with the spirit): “Human-in-the-loop” is a defensive frame. The offensive frame is AI in the loop of human potential—systems that expand what a person or team can do.

Customer support, medicine, legal, accounting, research, commerce—many workflows will be rebuilt around agentic assistance, not bolted-on chat panels.

C) “AI makes expert generalists”

Google’s Amin Vahdat framed a powerful idea: AI won’t only create new discoveries—it will synthesize across silos, which is especially transformative in life sciences and complex domains where knowledge is fragmented.

This flips the old narrative of specialization: with AI, more people can operate as high-leverage generalists, if organizations redesign workflows around that capability.

D) China is a real competitor at the application+model edge

This came up explicitly: the US still has structural advantages in chips and frontier ecosystems, but China is moving fast—especially in open models and application velocity.

Moonshot AI’s Kimi releases are one example. Kimi K2.5, released in late January 2026, is positioned as an open(-ish) multimodal model with agentic tooling themes (including “agent swarm” language), and it’s been widely covered in the AI press.

DeepSeek remains another reference point in the “fast iteration + open source gravity” story.

My bold prediction: Models + Applications will collapse

This didn’t get stated bluntly on stage, but it’s the arc I see:

Information-processing software (a huge chunk of SaaS) gets absorbed into model platforms

“Apps” become interfaces + workflow policies + distribution, while capability lives inside model layers

The marginal cost of many software functions trends toward compute + energy

This won’t happen overnight. It’s an S-curve measured in years, maybe decades. But the direction feels clear: software becomes embedded intelligence, not standalone products.

CONCLUSION: Takeaways I’m taking into 2026

We are early, but the shape of the stack is now visible. The era of “renting intelligence” is closing; the era of Intelligence Autonomy has begun.

The overarching lesson from the summit is that infrastructure is no longer a commodity—it is the physical armor for your company’s intelligence. To win in this economy, you cannot simply be a tenant in someone else’s cloud.

Here are my top takeaways for the year ahead:

Sovereign AI is the New Imperative (Do Not Outsource the Brain): In 2026, AI compute has transitioned from a utility to a core differentiator. You cannot build a durable advantage if your “neural tissue” lives on a competitor’s rental servers. The move is toward Private AI Factories—where you own the latency, the security, and the schedule. If you outsource your inference pipeline, you outsource your destiny.

Energy is Strategy—and the “Gigawatt Moat” is Real: The winners are no longer just those with the best algorithms, but those with the best PPAs (Power Purchase Agreements). “Abundant power + build capacity” regions will pull ahead, and we will see more companies treating energy generation as a balance sheet asset.

Custom Silicon is Now the Standard Playbook: The generic GPU era is fading. From hyperscalers to frontier labs, bespoke silicon is the only way to break the cost/latency curve. If you aren’t optimizing your hardware for your specific models, you are paying a “generalist tax.”

World Models are the Next Frontier (Beyond Language): We are moving from “software that writes” to “software that simulates.” Spatial Intelligence and World Models will unlock the physical economy (robotics, logistics, biology), creating a new class of value distinct from today’s text-based LLMs.

The Great Collapse: Models and Apps Merge: The distinction between “SaaS” and “Model” is evaporating. Software is becoming embedded intelligence, forcing the industry to re-price where value lives. The app is just the interface; the model is the engine.

The Final Word: The winners of this phase won’t just ship “AI features.” They will secure advantages at multiple layers of the stack—from the electron to the inference. They will move from “Cloud First” to “Sovereign First,” building the proprietary infrastructure necessary to control their own intelligence.

Enjoy and always be in the know,

Paddy Ramanathan

Founder of iValley and Host of the FINTECHTALK™ Show (on Substack, Apple Podcasts, YouTube, and Spotify)

Thanks ChatGPT and Gemini for suggestions.